How Ready Is Your Finance Team for AI? A Scoring Framework Across Eight Dimensions

A Centric study published in early 2026 found that organizations performing internal AI readiness assessments consistently overestimate their scores by 0.5 to 1.0 points compared to external evaluations. On a five-point scale, that's the difference between "we're ready to pilot" and "we need six months of data cleanup first." For finance teams specifically, the overestimation tends to cluster around data quality and governance, the two dimensions where confidence runs furthest ahead of reality.

The problem with most AI readiness assessments is that they're designed for the enterprise as a whole. They ask about your cloud infrastructure, your data science team headcount, your innovation culture. None of that tells a CFO whether their close process can absorb AI-assisted reconciliation next quarter. A finance-specific lens, like the one outlined in our guide to AI in corporate finance, is more useful. Finance has its own readiness profile: the data lives in ERPs and spreadsheets, the processes are regulated, the tolerance for error is measured in basis points, and the team's technical skills range from "built a pivot table once" to "writes Python on weekends." A useful assessment needs to account for all of that.

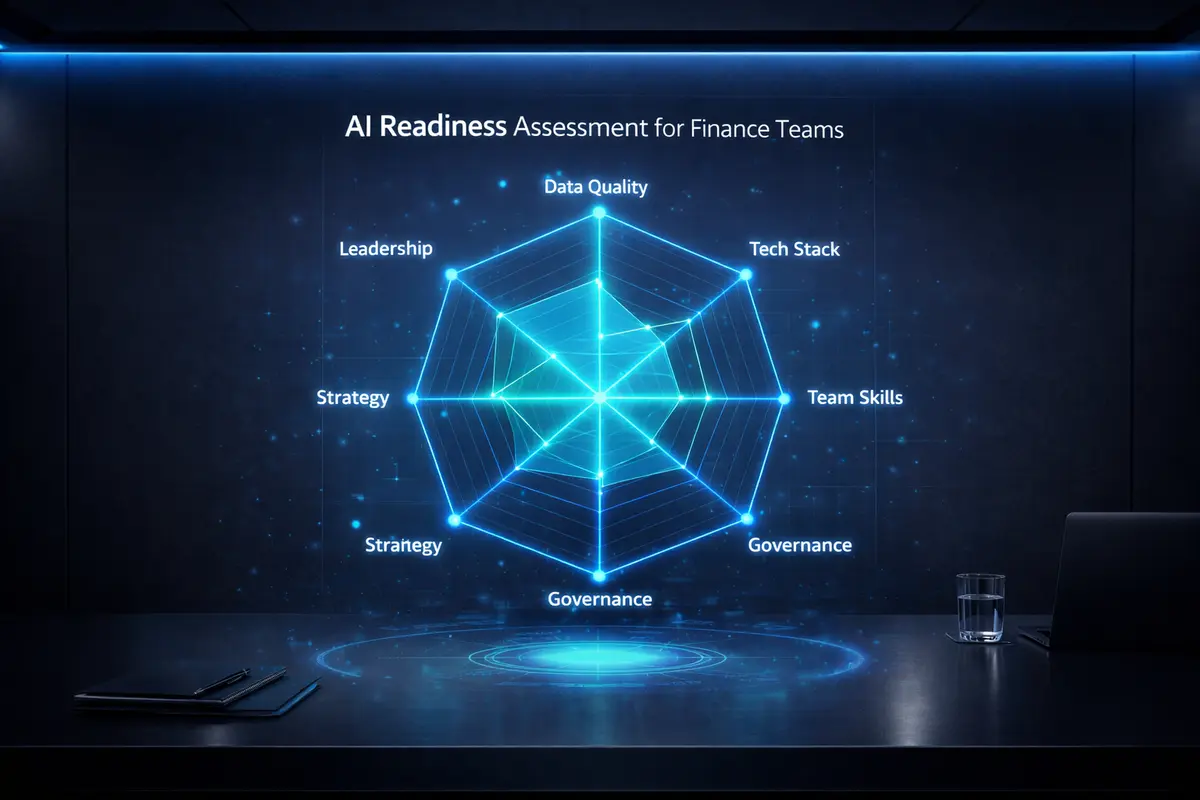

Here's how a finance-specific readiness framework breaks down. Eight dimensions, each scored independently, producing both a composite score and a radar chart that shows where the gaps are. The dimensions: Data Quality and Accessibility, Process Documentation and Standardization, Technology Stack Maturity, Team Skills and Adaptability, Leadership Alignment and Sponsorship, Governance and Controls, Change Management Readiness, and Vendor and Partner Ecosystem. Each one maps to a specific bottleneck that blocks or enables AI adoption in a finance function.
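To make the scoring mechanics concrete, here is a minimal Python sketch of how eight independent dimension scores might roll up into a composite. It rests on assumptions the framework itself doesn't spell out: a 1.0 to 5.0 scale, equal weighting across dimensions, and hypothetical function names (`composite_score`, `weakest_dimensions`). Apart from the 2.4 and 1.8 figures, the example scores are illustrative, not real assessment data.

```python
from statistics import mean

# The eight dimensions named in the framework.
DIMENSIONS = [
    "Data Quality and Accessibility",
    "Process Documentation and Standardization",
    "Technology Stack Maturity",
    "Team Skills and Adaptability",
    "Leadership Alignment and Sponsorship",
    "Governance and Controls",
    "Change Management Readiness",
    "Vendor and Partner Ecosystem",
]


def composite_score(scores: dict[str, float]) -> float:
    """Unweighted mean of all eight dimension scores (equal weights are an assumption)."""
    missing = [d for d in DIMENSIONS if d not in scores]
    if missing:
        raise ValueError(f"missing dimensions: {missing}")
    for name in DIMENSIONS:
        # Assumed scale: 1.0 (lowest) to 5.0 (highest).
        if not 1.0 <= scores[name] <= 5.0:
            raise ValueError(f"{name}: score {scores[name]} is outside 1.0-5.0")
    return round(mean(scores[d] for d in DIMENSIONS), 2)


def weakest_dimensions(scores: dict[str, float], n: int = 2) -> list[str]:
    """Return the n lowest-scoring dimensions, i.e. the gaps a radar chart would show."""
    return sorted(DIMENSIONS, key=lambda d: scores[d])[:n]


# Illustrative scores for a hypothetical finance team.
example_scores = {
    "Data Quality and Accessibility": 2.4,
    "Process Documentation and Standardization": 3.0,
    "Technology Stack Maturity": 3.0,
    "Team Skills and Adaptability": 3.2,
    "Leadership Alignment and Sponsorship": 3.4,
    "Governance and Controls": 1.8,
    "Change Management Readiness": 2.6,
    "Vendor and Partner Ecosystem": 3.0,
}

if __name__ == "__main__":
    print("Composite:", composite_score(example_scores))
    print("Biggest gaps:", weakest_dimensions(example_scores))
```

The composite alone hides the shape of the profile: a middling average can sit on top of a governance score that would block any pilot, which is why the per-dimension view matters as much as the headline number.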

Data Quality and Accessibility is where most finance teams discover their real starting point. You might have clean GL data in your ERP, but what about the Excel workbooks your FP&A team maintains outside the system? The consolidation adjustments logged in email threads? The vendor master data that hasn't been scrubbed since 2019? AI models trained on inconsistent data produce inconsistent outputs. That's true in every domain, but in finance the inconsistency flows directly into reported numbers. Scoring this dimension honestly means auditing not just your system of record, but the shadow systems around it.

Process Documentation matters because AI agents need explicit workflow definitions to operate. If your close process lives in the heads of three senior accountants who've been running it for a decade, an agent can't replicate it. Industry benchmarks from The Thinking Company put financial services organizations in the 2.8 to 3.5 range on a five-point maturity scale for this dimension, which translates roughly to "some processes are documented, but critical knowledge remains tribal." Before you deploy AI into any workflow, you need that workflow written down: every step, every decision point, every exception path.

Technology Stack Maturity asks whether your existing systems can talk to AI tools. If your ERP is on a version that predates API-first architecture, or your reporting stack requires manual CSV exports to move data between systems, the integration cost of adding AI may exceed the automation benefit. Oracle's NetSuite AI Connector Service, released in August 2025, gives a useful benchmark: it exposes ERP data and business logic to any MCP-compatible AI client through permission-scoped sessions. If your ERP vendor offers something comparable, your tech stack scores well on this dimension. If your team is still uploading flat files, you have preliminary work to do.

Team Skills and Governance are the dimensions where the assessment diverges furthest from generic enterprise frameworks. A finance team doesn't need data scientists. It needs accountants and analysts who can evaluate AI-generated outputs critically: the same skill set they apply when reviewing a junior analyst's work, pointed at a different kind of tool. Governance, meanwhile, means having clear policies about which data can interact with AI tools, who validates AI-generated outputs, and how you maintain audit trails. A purpose-built AI Governance Policy Generator can accelerate that process considerably. Few organizations have this groundwork in place: only 23% have a formal AI strategy, per current benchmarks. For finance teams subject to SOX, GDPR, or industry-specific regulations, governance isn't a nice-to-have. It's the prerequisite that determines whether AI deployment creates value or creates liability.

The value of a structured assessment is that it replaces vague optimism with a specific action plan. Instead of "we should do something with AI," you get "our data quality scores 2.4, our governance scores 1.8, and here's the Crawl-Walk-Run sequence of work that moves both scores up before we attempt a pilot." RoboCFO's AI Readiness Scorecard runs this assessment across all eight dimensions in about ten minutes. You get a composite score, a radar chart showing your profile, and a Crawl-Walk-Run maturity classification. The free tier gives you the score and visualization. The full report adds a prioritized action plan, a 90-day pilot recommendation, and a 12-month phased roadmap you can hand to your board or PE sponsor.
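As a rough illustration of how a composite score could map to a Crawl-Walk-Run stage, and how the 0.5 to 1.0 point self-assessment bias might change that stage, here is a short Python sketch. The cut-offs (2.5 and 3.5) and the function names are hypothetical placeholders chosen for this example; they are not the thresholds any particular scorecard uses.

```python
def classify_stage(composite: float, walk_at: float = 2.5, run_at: float = 3.5) -> str:
    """Map a composite score to a maturity stage.

    The walk_at and run_at cut-offs are assumptions for illustration only.
    """
    if composite < walk_at:
        return "Crawl"
    if composite < run_at:
        return "Walk"
    return "Run"


def externally_adjusted_range(
    self_score: float, low_bias: float = 0.5, high_bias: float = 1.0, floor: float = 1.0
) -> tuple[float, float]:
    """Discount a self-assessed score by the 0.5 to 1.0 point overestimation range.

    Returns (pessimistic, optimistic) estimates, never below the scale floor.
    """
    pessimistic = max(floor, self_score - high_bias)
    optimistic = max(floor, self_score - low_bias)
    return pessimistic, optimistic


if __name__ == "__main__":
    self_assessed = 2.8  # illustrative self-assessed composite
    low, high = externally_adjusted_range(self_assessed)
    print("Self-assessed stage:", classify_stage(self_assessed))
    print("Stage after discounting:", classify_stage(low), "to", classify_stage(high))
```

Under these assumed cut-offs, a team that scores itself at 2.8 lands in "Walk," but both ends of the discounted range fall back into "Crawl." That gap is the practical argument for checking a self-assessment against an outside view before committing to a pilot.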